We are currently witnessing a massive shift in how software is created. The old way of doing things involved wizened, expensive engineers who spent decades learning the "pipes" of a system. Today, they are being replaced by "AI natives" who use vibe coding to prompt entire features into existence in minutes. It feels like a revolution in productivity, but it is actually creating a phenomenon called Dark Code.

Dark Code is software that works today, passes its tests, and satisfies the prompt, but it exists in a total vacuum of human understanding. It was never part of a standard build process. It has no provenance. No one actually knows why it works or what specific dependencies it relies on. We are essentially "YOLOing" code into production and hoping the vibes stand the test of time.

The danger here is not just technical; it is legal and financial. In the old days, if a system crashed, you could call in the senior architect who understood the core logic. Today, that architect has been laid off. In their place is a youngster who is brilliant at prompting but does not understand the weight of liability. They may not even be able to spell liability, much less know what it is.

When that AI-generated code is touching financial transactions, HIPAA-protected health data, or sensitive PII, who is actually accountable? It is not the model, and it certainly isn't the model's creator. The "AI native" might have prompted the code into existence, but they often don't have the depth to understand the regulatory landmines hidden in a 500-line response of generated code. They have created a system that they cannot explain and, more importantly, cannot maintain when the underlying AI model changes tomorrow.

To see the real-world consequences of this approach, we only have to look at recent headlines. Amazon reportedly reduced its workforce by 16,000 engineers while leaning heavily into AI automation. Shortly after, the company reportedly faced three significant service disruptions in just five months. According to those reports, the cause was traced back to AI-induced issues that lacked sufficient human oversight.

The result was a total pivot. Amazon reportedly had to mandate that humans review all code again. The lesson is that you cannot run a global infrastructure on Dark Code. When you lose the "Human in the Loop" who actually comprehends the logic, you lose the ability to react when the system inevitably breaks. You enter a compounding spiral of problems where each AI "fix" only adds more layers of Dark Code that no one understands.

The only way to avoid this spiral is to move from vibe coding to vibe assembly. Instead of letting an AI hallucinate raw logic, we force it to work with a catalog of pre-approved, self-describing components.

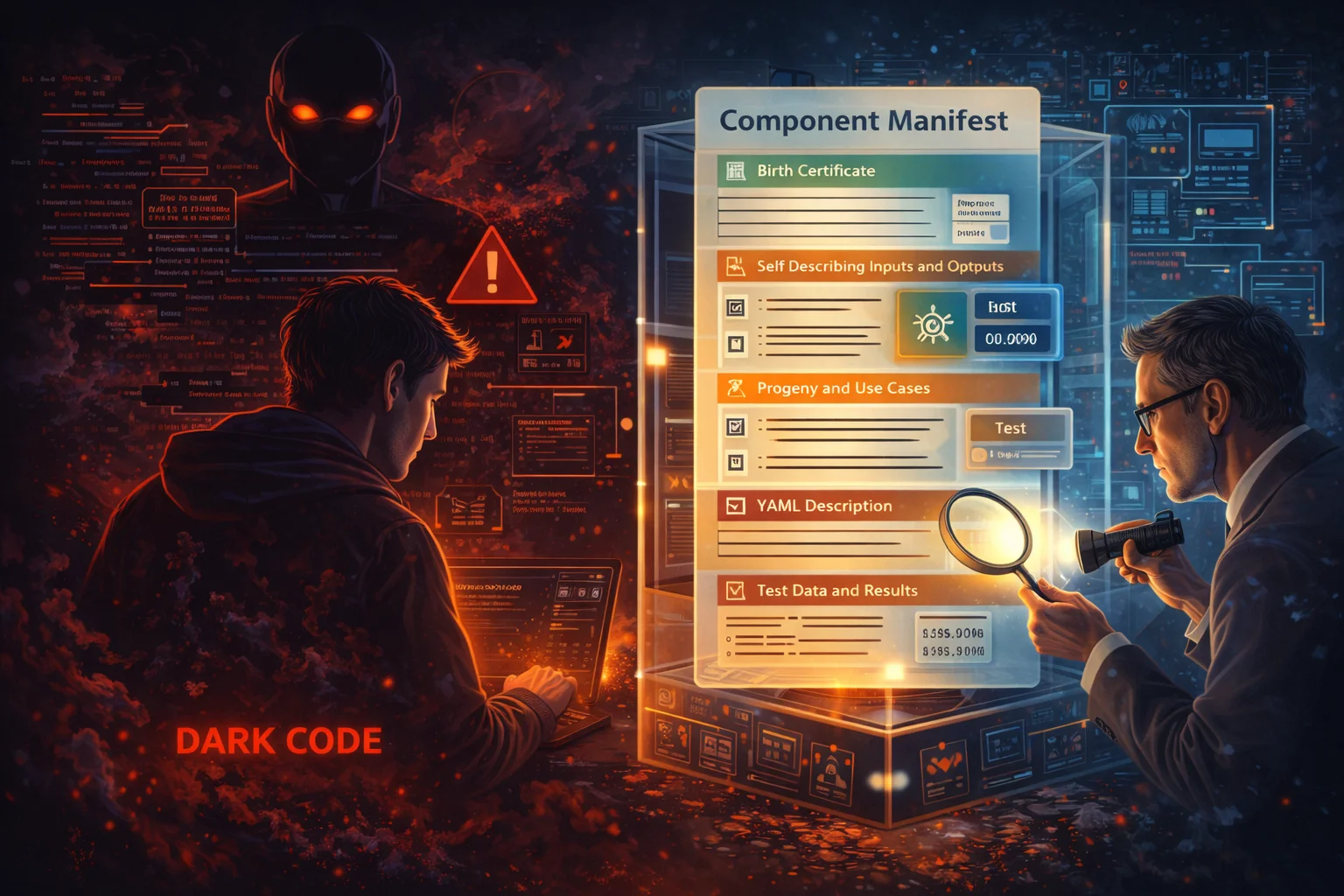
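As a rough illustration of what "working from a catalog" could mean in practice, here is a minimal Python sketch. It assumes the AI proposes an assembly plan as a list of component references rather than raw code, and that a gate rejects anything outside the approved catalog. The names `APPROVED_CATALOG`, `AssemblyStep`, and `unapproved_components`, along with the component ids, are hypothetical and not taken from any real tool.

```python
from dataclasses import dataclass, field

# Hypothetical catalog of pre-approved component ids (illustrative only).
APPROVED_CATALOG = {"auth.session", "payments.charge", "audit.log"}


@dataclass
class AssemblyStep:
    """One step of an AI-proposed plan: a reference to a component, not raw code."""
    component_id: str
    params: dict = field(default_factory=dict)


def unapproved_components(plan, catalog=APPROVED_CATALOG):
    """Return the ids in the plan that are not in the approved catalog."""
    return [step.component_id for step in plan if step.component_id not in catalog]


# Example plan: one approved component, one the AI made up on the spot.
plan = [
    AssemblyStep("payments.charge", {"currency": "USD"}),
    AssemblyStep("crypto.homebrew"),
]

rejected = unapproved_components(plan)
if rejected:
    print("Plan rejected, unapproved components:", rejected)
```

The design choice in this sketch is that the gate is deterministic: the AI can propose whatever it likes, but only catalog entries make it into the build.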

The secret weapon here is the Component Manifest. Unlike a Dark Code blob, an assembled component comes with a digital birth certificate that includes:

When you use assembly, you are creating a "Glass Box." If a component fails or a dependency changes, you don't have to guess. You can look at the manifest and understand the exact blast radius of the problem. You are giving the AI the building blocks it needs, while giving the humans the visibility they require to stay in control.
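To make the "blast radius" idea concrete, here is a small Python sketch. It is only an assumption about what a manifest might hold: the fields `name`, `version`, `owner`, and `depends_on` are hypothetical, as are the example components. Given a set of manifests, it walks the declared dependencies in reverse to find everything affected when one component changes or fails.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ComponentManifest:
    """Hypothetical manifest; these field names are assumptions for illustration."""
    name: str
    version: str
    owner: str
    depends_on: List[str] = field(default_factory=list)


def blast_radius(manifests, changed):
    """Return the names of all components that directly or transitively depend on `changed`."""
    # Build a reverse index: dependency name -> components that declare it.
    dependents = {}
    for manifest in manifests:
        for dep in manifest.depends_on:
            dependents.setdefault(dep, set()).add(manifest.name)

    # Walk the reverse index outward from the changed component.
    affected = set()
    stack = [changed]
    while stack:
        current = stack.pop()
        for name in dependents.get(current, ()):
            if name not in affected:
                affected.add(name)
                stack.append(name)
    return affected


manifests = [
    ComponentManifest("tls-lib", "1.2.0", "platform-team"),
    ComponentManifest("http-client", "3.0.1", "platform-team", ["tls-lib"]),
    ComponentManifest("payments-api", "2.4.0", "payments-team", ["http-client"]),
    ComponentManifest("audit-log", "1.0.0", "compliance-team"),
]

print(sorted(blast_radius(manifests, "tls-lib")))
```

Because every dependency is declared in a manifest, the answer comes from a lookup rather than from someone's memory of how the system was prompted together.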

The deficiency of vibe coding is that it ignores the dimension of time. It solves for "now" without any consideration for "tomorrow." It produces Dark Code that is unmaintainable, legally indefensible, and structurally opaque.

Vibe assembly solves this by making the core of the system readable to both humans and AI. It replaces the "YOLO" mentality with a structured, manifest-driven approach that protects the company’s crown jewels. By using a catalog of pre-approved components, you ensure that even when the AI natives are at the helm, the system remains anchored in deterministic engineering. You get the speed of the future without sacrificing the accountability of the past.

Are you building a legacy of Dark Code, or are you assembling a system that can stand the light of a regulatory audit?